With the boom of AI-generated text, images, and videos, both lawmakers and average internet users have been calling for more transparency. Simply adding a label might seem like a reasonable ask (and it is), but it is not an easy one, and the existing solutions, like AI-powered detection and watermarking, have some serious pitfalls.

As my colleague Melissa Heikkilä has written, most of the current technical solutions “don’t stand a chance against the latest generation of AI language models.” Nevertheless, the race to label and detect AI-generated content is on.

That’s where this protocol comes in. Started in 2021, C2PA (named for the group that created it, the Coalition for Content Provenance and Authenticity) is a set of new technical standards and freely available code that securely labels content with information clarifying where it came from.

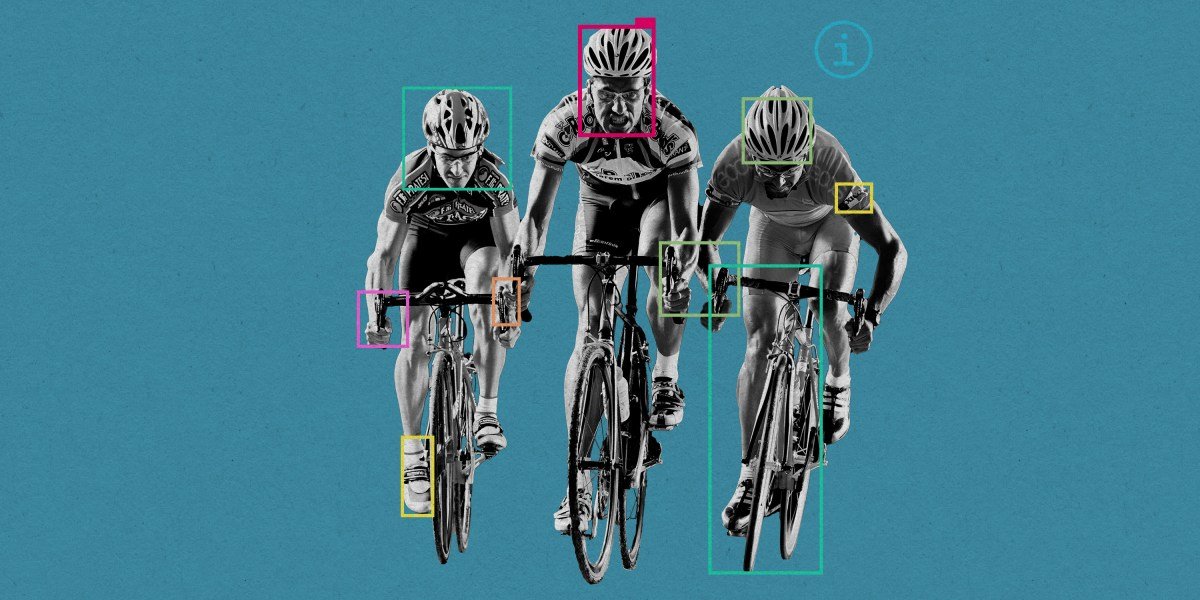

This means that an image, for example, is marked with information by the device it originated from (like a phone camera), by any editing tools (such as Photoshop), and ultimately by the social media platform that it gets uploaded to. Over time, this information creates a sort of history, all of which is logged.
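To make the idea of a logged history more concrete, here is a minimal Python sketch of a tamper-evident log in which each entry records a hash of the one before it. This is a simplified illustration and not the actual C2PA format: the function names (`entry_digest`, `append_entry`, `is_intact`) and the entry fields are assumptions made up for this example.

```python
import hashlib
import json


def entry_digest(body: dict) -> str:
    """Return a SHA-256 hex digest of an entry's canonical JSON form."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_entry(history: list, actor: str, action: str) -> list:
    """Return a new history with one more entry linked to the previous one."""
    prev = history[-1]["digest"] if history else ""
    body = {"actor": actor, "action": action, "prev": prev}
    return history + [{**body, "digest": entry_digest(body)}]


def is_intact(history: list) -> bool:
    """Check that every entry matches its digest and links to its predecessor."""
    prev = ""
    for entry in history:
        body = {
            "actor": entry["actor"],
            "action": entry["action"],
            "prev": entry["prev"],
        }
        if entry["prev"] != prev or entry["digest"] != entry_digest(body):
            return False
        prev = entry["digest"]
    return True


# Hypothetical history for one image: device, editing tool, then platform.
history = []
history = append_entry(history, "phone camera", "captured")
history = append_entry(history, "Photoshop", "edited")
history = append_entry(history, "social media platform", "uploaded")

print(is_intact(history))
```

Changing any field in an earlier entry makes `is_intact` return `False`, because the stored digest no longer matches. A plain hash chain like this only catches edits to individual entries, since someone who rewrote the whole log could recompute every digest; C2PA itself relies on cryptographic signatures, which this sketch leaves out.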

The tech itself—and the ways in which C2PA is more secure than other AI-labeling alternatives—is pretty cool, though a bit complicated. I get more into it in my piece, but it’s perhaps easiest to think about it like a nutrition label (which is the preferred analogy of most people I spoke with). You can see an example of a deepfake video here with the label created by Truepic, a founding C2PA member, with Revel AI.

“The idea of provenance is marking the content in an interoperable and tamper-evident way so it can travel through the internet with that transparency, with that nutrition label,” says Mounir Ibrahim, the vice president of public affairs at Truepic.

When it first launched, C2PA was backed by a handful of prominent companies, including Adobe and Microsoft, but over the past six months, its membership has increased 56%. Just this week, the major media platform Shutterstock announced that it would use C2PA to label all of its AI-generated media.

It’s based on an opt-in approach, so groups that want to verify and disclose where content came from, like a newspaper or an advertiser, will choose to add the credentials to a piece of media.
